How To Check If Your Robots.txt File Is Working Properly

This article was updated on August 6th, 2020 at 05:57 am

A Robots.txt file tells search engines which files and directories to crawl and which to skip. Setting one up is one of the simpler things to do in SEO, yet many people ignore it, even today.

Even though it’s easy to set up, it can get confusing if you’re running a big website, especially when you need to figure out whether the instructions in your Robots.txt file are working properly and whether the right URLs are being excluded.

Until now, you had to wait for days and check SERPs to confirm that your Robots.txt file was set up correctly. Even with a foolproof Robots.txt, the way your website is structured can let certain files or folders slip through, and you’d only find out once the damage was done, i.e. once files or folders that were supposed to be excluded from SERPs had been indexed.

But Google has a solution to this problem.

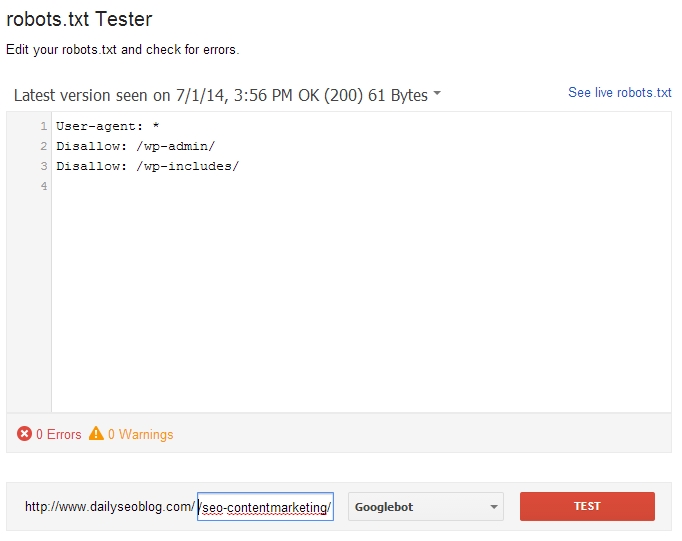

It has launched a new tool in Google Webmaster Tools that lets you check your Robots.txt file and confirm which files and folders it would block or allow.

You can find it here (Robots.txt checking tool).

Here you’ll see the current robots.txt file, and you can test URLs to see whether they’re disallowed for crawling. To guide you through complicated directives, the tool highlights the specific directive that led to the final decision. You can also make changes to the file and test those. The tool does not change your site, so you’ll need to upload the new version of the file to your server afterwards for the changes to take effect.
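To make the idea of "the directive that led to the final decision" concrete, here is a minimal Python sketch. It is not Google's implementation. The function name `deciding_rule` and the sample rules are hypothetical, and the matcher is deliberately simplified: it only understands literal path prefixes (no `*` or `$` wildcards), it matches user-agent groups by exact name, and it assumes the longest matching rule wins, with Allow winning ties.

```python
from urllib.parse import urlsplit


def deciding_rule(robots_txt, url, user_agent="*"):
    """Return (verdict, rule_line) for a URL under a simplified matcher.

    Assumptions: literal path prefixes only, exact user-agent group names,
    longest matching rule wins, and Allow beats Disallow on equal length.
    """
    path = urlsplit(url).path or "/"
    groups = {}  # agent name -> list of (kind, pattern, original line)
    current, seen_rule = [], False

    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if seen_rule:  # a rule line closed the previous group
                current, seen_rule = [], False
            current.append(value.lower())
            groups.setdefault(value.lower(), [])
        elif field in ("allow", "disallow"):
            seen_rule = True
            if value:  # an empty pattern matches nothing
                for agent in current:
                    groups[agent].append((field, value, raw.strip()))

    rules = groups.get(user_agent.lower())
    if rules is None:  # no group for this agent: fall back to "*"
        rules = groups.get("*", [])

    matches = [rule for rule in rules if path.startswith(rule[1])]
    if not matches:
        return "Allowed", None  # nothing matched, so crawling is allowed

    kind, _, raw = max(matches, key=lambda r: (len(r[1]), r[0] == "allow"))
    return ("Allowed" if kind == "allow" else "Disallowed"), raw


sample = """User-agent: *
Disallow: /private/
Allow: /private/press/
"""

print(deciding_rule(sample, "https://example.com/private/press/kit.html"))
# ('Allowed', 'Allow: /private/press/')
print(deciding_rule(sample, "https://example.com/private/notes.html"))
# ('Disallowed', 'Disallow: /private/')
```

The second value in each result is the line that decided the outcome, which is the same kind of information the tool highlights for you.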

How to check if a URL is being indexed by Google/blocked by Robots.txt or not?

If you want to check whether a particular URL is blocked from being crawled by Google, copy and paste the URL into the Robots.txt testing tool here, and click the “Test” button.

If the URL is allowed, the button turns green and reads “Allowed”; if not, it turns red and reads “Disallowed”.

You can also check your live Robots.txt file from here to ensure everything is working properly.
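If you have many URLs to check, pasting them one at a time gets tedious. As a rough local complement to the tool, the sketch below uses Python's standard `urllib.robotparser` module to label a batch of URLs as “Allowed” or “Disallowed”. The helper name `check_urls`, the sample rules and the `example.com` URLs are made up for illustration. Note that Python's parser applies rules in the order they appear in the file, so for files with overlapping Allow and Disallow lines its verdict may differ from Google's; treat the result as a first pass, not a replacement for the testing tool.

```python
from urllib.robotparser import RobotFileParser


def check_urls(robots_txt, urls, user_agent="Googlebot"):
    """Map each URL to "Allowed" or "Disallowed" for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse rules from a string
    return {
        url: "Allowed" if parser.can_fetch(user_agent, url) else "Disallowed"
        for url in urls
    }


# Hypothetical rules and URLs, used only to illustrate the call.
robots_txt = """User-agent: *
Disallow: /wp-admin/
"""

results = check_urls(
    robots_txt,
    [
        "https://example.com/blog/my-post/",
        "https://example.com/wp-admin/options.php",
    ],
)
for url, verdict in results.items():
    print(verdict, url)
```

To test the file that is actually live on your server instead of a pasted copy, `RobotFileParser` also offers `set_url()` and `read()`, which download and parse the file from the URL you give it.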